Microservices Architecture in 2026: Building Scalable Distributed Systems

S.C.G.A. Team

April 14, 2026

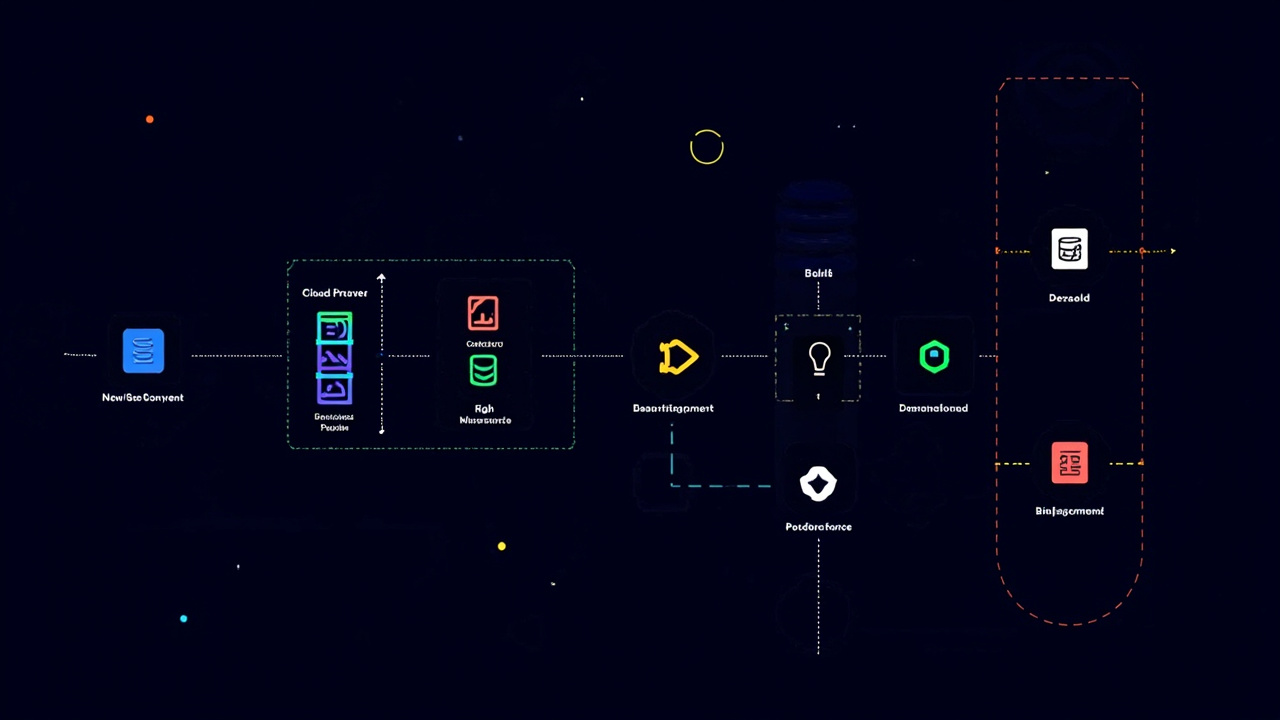

This article explores microservices architecture practices in depth, covering service decomposition strategies, communication patterns, deployment architecture, and monitoring strategies to help development teams successfully transition from monolith to microservices.

In the software development landscape of 2026, microservices architecture has become the preferred approach for building large-scale application systems. With the maturation of cloud-native technologies and containerized deployment, more and more enterprises are splitting large monolithic applications into multiple independently deployable, scalable microservices. This article covers the core concepts, practical methods, and common challenges of microservices architecture to help you build efficient and reliable distributed systems.

What is Microservices Architecture?

Microservices architecture is a design approach that decomposes an application into multiple small, autonomous services. Each service is responsible for a specific business function, with its own database and technology stack, enabling independent development, deployment, and scaling. This architecture contrasts sharply with traditional monolith architecture, where all functional modules are tightly coupled within a single application.

Core characteristics of microservices include: clear service boundaries, independent deployment autonomy, distributed data management, and highly automated operations processes. Each service can scale independently based on its load without affecting the entire system’s operation. This flexibility makes microservices architecture particularly suitable for modern applications requiring high availability and rapid iteration.

Service Decomposition: Finding the Right Balance

Service decomposition is one of the most challenging decisions in microservices architecture. Over-decomposition makes system complexity grow rapidly, because every additional service adds network calls, deployments, and failure modes, while under-decomposition loses the core benefits of microservices. Commonly used decomposition principles include the Single Responsibility Principle (each service focuses on a single function), Domain-Driven Design (decomposition based on business domain boundaries), and the co-change rule, often called the Common Closure Principle (if two features must always be modified together, they should belong to the same service).
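One rough way to check the rule that features which change together belong together is to count how often modules are modified in the same commit. The sketch below is only an illustration: the `commits` data and module names are hypothetical, and in a real project the lists would come from version-control history.

```python
from collections import Counter
from itertools import combinations


def co_change_counts(commits):
    """Count how often each pair of modules is modified in the same commit."""
    pairs = Counter()
    for modules in commits:
        # Sort so that ("billing", "orders") and ("orders", "billing") share one key.
        for a, b in combinations(sorted(set(modules)), 2):
            pairs[(a, b)] += 1
    return pairs


# Hypothetical history: each entry lists the modules touched by one commit.
commits = [
    ["orders", "billing"],
    ["orders", "billing"],
    ["orders", "billing", "catalog"],
    ["catalog"],
    ["notifications"],
]

counts = co_change_counts(commits)
for pair, n in counts.most_common():
    print(pair, n)
```

Pairs with a high count, such as `orders` and `billing` here, are candidates for staying in one service, while modules that rarely change together are easier to split apart.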

In practice, we recommend analyzing candidates along two dimensions: business value and technical complexity. Business functions that change frequently are good candidates for independent services, since they can then iterate on their own schedule, while stable and highly coupled functions can remain in the monolith or in larger services. Team structure is another important consideration. Conway’s Law states that system architecture tends to mirror the organization’s communication structure, so service boundaries should align with team boundaries.

Inter-Service Communication: Balancing Sync and Async

Communication between microservices directly affects system performance and reliability. Common communication patterns include synchronous REST/gRPC calls and asynchronous message queues. Synchronous calls suit scenarios requiring immediate responses, such as user authentication and data queries, while asynchronous communication suits scenarios with longer processing times or those that tolerate eventual consistency, such as order processing and notification delivery.

In 2026 practices, many teams adopt a hybrid communication strategy: core business processes use synchronous calls for real-time responsiveness, while edge functions (such as notifications and log analysis) use asynchronous processing. This approach keeps business logic clear while leveraging the decoupling advantages of asynchronous communication. Whichever communication method is adopted, teams need a strategy for service failures: setting reasonable timeout values, retrying transient errors, and applying the circuit breaker pattern to prevent cascading failures.
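The retry and circuit breaker ideas can be sketched in a few lines of Python. This is a minimal illustration using only the standard library, not a production implementation. The class name, the thresholds, and the injectable `clock` and `sleep` parameters are assumptions made for the example. Timeouts are not shown because they are normally set on the HTTP or gRPC client itself.

```python
import time


class CircuitOpenError(Exception):
    """Raised when the breaker rejects a call without trying the service."""


class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures  # consecutive failures before opening
        self.reset_after = reset_after    # seconds to wait before a trial call
        self.clock = clock
        self.failures = 0
        self.opened_at = None             # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; call rejected")
            # Cool-down elapsed: let one trial call through (half-open state).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.opened_at is not None or self.failures >= self.max_failures:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            raise
        # A success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result


def call_with_retry(func, attempts=3, base_delay=0.1, sleep=time.sleep):
    """Retry func with exponential backoff; never retry an open circuit."""
    for attempt in range(attempts):
        try:
            return func()
        except CircuitOpenError:
            raise
        except Exception:
            if attempt == attempts - 1:
                raise
            sleep(base_delay * 2 ** attempt)
```

A caller would typically wrap each remote call as `call_with_retry(lambda: breaker.call(fetch_profile, user_id))`, where `fetch_profile` is a hypothetical client function. Once the breaker opens, further calls fail fast instead of piling up behind a struggling service.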

Containerization and Orchestration: The Kubernetes Ecosystem

Containerization is the foundation of microservices deployment. Docker packages applications and their dependencies into standardized container images, ensuring services run consistently in any environment. Kubernetes has become the de facto standard for container orchestration, providing core functionalities such as service discovery, load balancing, automated scaling, and self-healing capabilities.

In Kubernetes environments, each microservice runs as one or more Pods, typically managed by a Deployment, and the Horizontal Pod Autoscaler can adjust the number of Pods based on metrics such as CPU and memory usage. This elastic scaling capability is key to handling traffic spikes in a microservices architecture. Additionally, service meshes that run on top of Kubernetes (such as Istio and Linkerd) provide more granular traffic management, observability, and security controls, enabling development teams to focus more on business logic rather than infrastructure operations.
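To make metric-based scaling concrete, the Kubernetes Horizontal Pod Autoscaler documents its core rule as desired replicas = ceil(current replicas × current metric / target metric). The function below is a simplified sketch of that rule only. The function name and the default replica bounds are assumptions for the example, and the real autoscaler also applies a tolerance band and stabilization windows that are omitted here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Simplified autoscaling rule: scale in proportion to metric pressure."""
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the configured bounds, as an autoscaler would.
    return max(min_replicas, min(max_replicas, raw))


# 4 Pods averaging 90% CPU against a 60% target.
print(desired_replicas(4, 90, 60))
# 4 Pods averaging 30% CPU against a 60% target.
print(desired_replicas(4, 30, 60))
```

With these inputs the first call scales out and the second scales in, which shows how a traffic spike turns into more Pods without manual intervention.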

Data Management and Distributed Transactions

In microservices architecture, each service owns an independent database, which brings data consistency challenges. Traditional ACID transactions cannot operate across service boundaries, so new data management strategies need to be introduced. The Saga pattern is a common method for handling distributed transactions, achieving eventual consistency through a series of local transactions and compensating operations.
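A minimal sketch of an orchestrated saga might look like the following. Each step pairs a local action with a compensating action, and a failure triggers the compensations of the completed steps in reverse order. The step functions and the `log` list are hypothetical stand-ins for calls to real services.

```python
class SagaFailed(Exception):
    """Raised after a failed step has been compensated."""


def run_saga(steps):
    """Run (action, compensation) pairs; undo completed steps on failure."""
    completed = []
    for action, compensate in steps:
        try:
            action()
        except Exception as exc:
            # Compensate in reverse order so later effects are undone first.
            for undo in reversed(completed):
                undo()
            raise SagaFailed(f"saga aborted: {exc}") from exc
        completed.append(compensate)


log = []  # stands in for side effects in three separate services


def reserve_stock():
    log.append("stock reserved")


def release_stock():
    log.append("stock released")


def charge_card():
    log.append("card charged")


def refund_card():
    log.append("card refunded")


def ship_order():
    raise RuntimeError("no courier available")


def cancel_shipment():
    log.append("shipment cancelled")


try:
    run_saga([
        (reserve_stock, release_stock),
        (charge_card, refund_card),
        (ship_order, cancel_shipment),
    ])
except SagaFailed as err:
    log.append(str(err))

print(log)
```

Because the shipping step fails, the payment is refunded and the stock released, leaving the system consistent without a cross-service transaction. A real implementation would also need to persist saga state and make compensations idempotent, which this sketch leaves out.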

Another important issue is the complexity of data queries. When data must be aggregated across multiple services, ad hoc service-to-service calls become inefficient and fragile. The API Composition pattern and event-driven data replication are common solutions. The former suits scenarios that need up-to-date data at request time, while the latter suits offline analysis or rebuilding read models. The choice between them should weigh business requirements against system constraints.
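As an illustration of API Composition, the sketch below has a composer fetch an order and then query two other services concurrently before merging the results. The three `fetch_*` coroutines and their return values are hypothetical placeholders for real network calls.

```python
import asyncio


async def fetch_order(order_id):
    await asyncio.sleep(0)  # stand-in for a call to the order service
    return {"id": order_id, "customer_id": "c-1", "total": 42.0}


async def fetch_customer(customer_id):
    await asyncio.sleep(0)  # stand-in for a call to the customer service
    return {"id": customer_id, "name": "Ada"}


async def fetch_shipment(order_id):
    await asyncio.sleep(0)  # stand-in for a call to the shipping service
    return {"order_id": order_id, "status": "in transit"}


async def order_details(order_id):
    """Compose one response from three services."""
    order = await fetch_order(order_id)
    # The two follow-up calls are independent, so issue them concurrently.
    customer, shipment = await asyncio.gather(
        fetch_customer(order["customer_id"]),
        fetch_shipment(order_id),
    )
    return {**order, "customer": customer, "shipment": shipment}


result = asyncio.run(order_details("o-1"))
print(result)
```

The composer keeps the join logic in one place, but its latency and availability depend on every service it calls. That dependency is the trade-off against replicating data through events.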

Monitoring and Observability: Building Visible Systems

The complexity of microservices architecture makes monitoring and troubleshooting more difficult. Observability has become a core operational requirement, including three key dimensions: Metrics, Logs, and Traces. These three combined can help teams quickly locate problems, understand system behavior, and optimize performance.

In 2026, OpenTelemetry has become the standard framework for observability, providing a unified way to collect metrics, logs, and traces. Combined with tools like Prometheus, Grafana, and Jaeger, teams can build a complete observability platform. Structured logging and distributed tracing should be planned from the early stages of service development, with unified naming conventions and context propagation mechanisms, so that a request’s path through the entire system can be linked together and analyzed.
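The idea of structured logs that carry a propagated trace identifier can be sketched with the Python standard library alone. This is not OpenTelemetry’s API. The field names, the `handle_request` function, and the `trace_id` convention are assumptions for illustration, and in practice an OpenTelemetry SDK would manage the context.

```python
import contextvars
import io
import json
import logging
import uuid

# Holds the trace ID for the request currently being handled.
trace_id_var = contextvars.ContextVar("trace_id", default=None)


class JsonFormatter(logging.Formatter):
    """Emit each record as one JSON object with a consistent set of fields."""

    def format(self, record):
        return json.dumps({
            "level": record.levelname,
            "service": record.name,
            "message": record.getMessage(),
            "trace_id": trace_id_var.get(),
        })


def make_logger(service_name, stream=None):
    logger = logging.getLogger(service_name)
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.handlers = [handler]
    logger.setLevel(logging.INFO)
    logger.propagate = False
    return logger


def handle_request(logger, incoming_trace_id=None):
    """Reuse the caller's trace ID if one was sent, otherwise start a new one."""
    trace_id_var.set(incoming_trace_id or uuid.uuid4().hex)
    logger.info("request received")
    return trace_id_var.get()


buffer = io.StringIO()  # captured here; a service would log to stdout
orders_logger = make_logger("orders", buffer)
handle_request(orders_logger, incoming_trace_id="abc123")
entry = json.loads(buffer.getvalue())
print(entry)
```

Because every service emits the same fields and forwards the same `trace_id`, log lines from different services can be joined on that value when investigating a single request.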

SCGA’s Microservices Practice

As a professional web application and system integration service provider, the SCGA team has extensive hands-on experience with microservices architecture. We help clients transition smoothly from traditional monolithic architecture to microservices by designing reasonable service boundaries, implementing automated CI/CD processes, and building complete monitoring systems. Whether designing new systems from scratch or splitting and refactoring existing ones, we can provide professional technical solutions and implementation support.

Microservices architecture brings considerable flexibility and scalability to modern application development, but it also places higher demands on teams’ technical capabilities and operational maturity. Choose the architecture approach that suits you and plan the implementation path carefully to fully leverage the advantages of microservices and build truly efficient and reliable distributed systems.

Tags: Microservices, Architecture, DevOps, Cloud, Backend
